🔥Teaching Agentic Strands Agents to assume Cross-Account IAM Roles and Read AWS Resources🔥

aka, which VMs are running hot on CPU? Or out of disk space?

This blog series focuses on presenting complex DevOps projects as simple and approachable via plain language and lots of pictures. You can do it!


Hey all!

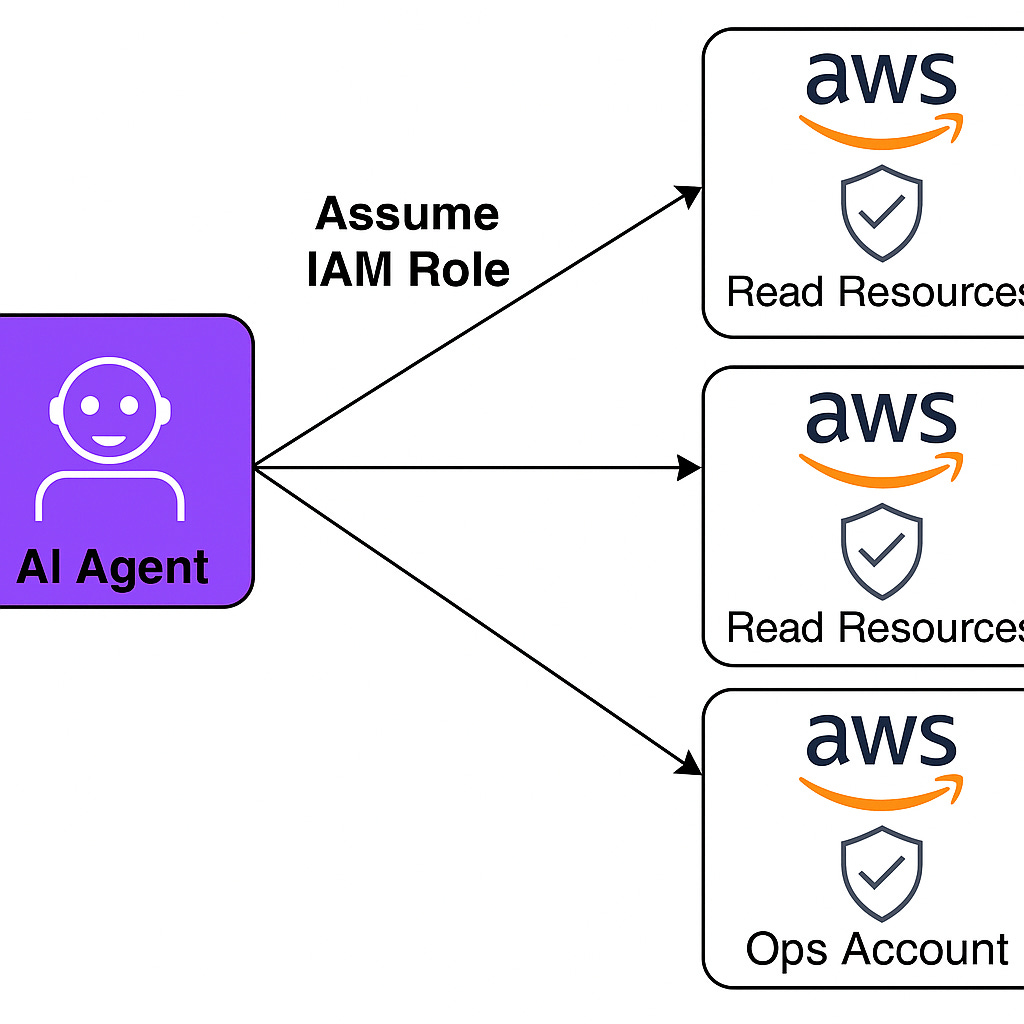

In this blog post, we’re going to integrate the AWS Labs CLI MCP server with a Strands-powered AI agent running in Lambda, with support for querying resources across multiple AWS accounts using profile-based role assumption.

The specific challenge: we need our AI assistant to query AWS resources across many different accounts (dev, staging, prod, ops, etc.)… with an MCP server that appears built to support a single authentication profile, in a single account.

The Multi-Account Challenge

When you ask an AI agent “List the EKS clusters in the Prod account,” the agent needs to:

1. Determine which AWS account “Prod” refers to

2. Assume a role in that account with read permissions

3. Execute the AWS API call with the assumed credentials

4. Return the results

The obvious approach is to use `aws sts assume-role` to get temporary credentials, then use those credentials in subsequent commands. This doesn’t work with the AWS CLI MCP server because it’s stateless - each command execution creates a fresh boto3 session. The MCP server can’t “remember” credentials from a previous assume-role call.

Bummer

Another approach would be creating a separate MCP client instance, one per account we might need to speak to, each configured with a different AWS profile. This creates tool sprawl and of course doesn’t scale well.

Double bummer

What we need is profile-based role assumption where the bot includes `--profile oak` in its AWS CLI commands, and the profile configuration automatically handles assuming the correct role using the Lambda execution role as the base credential source.

Why This Is Complicated

The AWS CLI MCP server uses boto3, not shell commands. When you pass `aws eks list-clusters --profile oak`, the MCP server:

1. Parses the command string to extract the profile name

2. Creates a boto3 Session with that profile

3. Loads profile configuration from the AWS config file

4. Resolves credentials according to the profile’s settings

5. Executes the API call

Each of these steps has failure modes that produce silent errors - boto3 just fails without helpful error messages. We’ll cover the debugging later.

The key insight: the `--profile` flag does work in the AWS CLI MCP server. It extracts the profile from the parsed command and passes it to `boto3.Session(profile_name=...)`. We just need to configure profiles correctly.
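To make that concrete, here is a minimal sketch of pulling `--profile` out of a command string. This is not the MCP server's actual parser (that lives in its parser.py); `extract_profile` is a hypothetical helper written only to illustrate the idea, using nothing but the standard library.

```python
import argparse
import shlex


def extract_profile(command, default_profile=None):
    """Return the value of --profile in an AWS CLI style command string.

    Hypothetical helper for illustration; not the MCP server's real code.
    """
    parser = argparse.ArgumentParser(add_help=False)
    parser.add_argument("--profile", default=default_profile)
    # parse_known_args keeps --profile and sets aside everything else
    known, _unparsed = parser.parse_known_args(shlex.split(command))
    return known.profile


print(extract_profile("aws eks list-clusters --profile oak"))
# The extracted name is what would then be handed to boto3.Session(profile_name=...)
```

If no `--profile` flag is present, the helper falls back to `default_profile`, which mirrors the fallback-to-a-default-profile behavior described in this post.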

Let’s do it!

Understanding How the AWS CLI MCP Works

The first thing to understand is that the AWS CLI MCP server doesn’t shell out to the AWS CLI binary. It uses boto3 directly. This is important because it means we need to think about boto3 session management, not shell command execution.

When you send `aws eks list-clusters --profile oak` to the MCP server, here’s what actually happens under the hood:

The server parses the command string and extracts the profile name from the `--profile` flag. I actually went digging through the source code to confirm this because the documentation wasn’t clear. In the parser.py file, there’s code that does:

    profile = getattr(global_args, 'profile', None)

Then in driver.py, when creating credentials:

    credentials = credentials or get_local_credentials(
        profile=translation.command.profile or AWS_API_MCP_PROFILE_NAME
    )

This means the `--profile` flag IS recognized and used. The server creates a fresh boto3 session for each command using that profile name.

Great, so profiles work

Now we need to understand where boto3 gets profile information from.

AWS Config File vs Credentials File

Boto3 looks in two places for configuration:

1. `~/.aws/credentials` - Contains access keys (AWS_ACCESS_KEY_ID and AWS_SECRET_ACCESS_KEY)

2. `~/.aws/config` - Contains profile settings like region, role_arn, and credential sources

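In my setup, boto3 did not pick up the profiles until the subprocess had the environment variable `AWS_SDK_LOAD_CONFIG=1` set and `AWS_CONFIG_FILE` pointing at the config file. The boto3 documentation says the config file is read by default, and `AWS_SDK_LOAD_CONFIG` is documented for other AWS SDKs (such as the Go SDK), so `AWS_CONFIG_FILE` may be the setting that really matters here. The worker sets both.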

This is critical and not obvious

Our profiles with role assumption settings live in the config file, so if boto3 doesn’t load that file, it can’t see them.

Lambda Credentials and credential_source

When your Lambda function runs, AWS provides temporary credentials via environment variables:

- AWS_ACCESS_KEY_ID

- AWS_SECRET_ACCESS_KEY

- AWS_SESSION_TOKEN

These credentials belong to the Lambda execution role. In our case, that’s the role `Ue1LiVeraResearchTestWorkerRole`.

In the AWS config file, you can tell a profile to use credentials from the environment with:

    [profile oak]
    role_arn = arn:aws:iam::123456789:role/read-only-role
    credential_source = Environment

This tells boto3: “Get the base credentials from environment variables, then use those to assume the role specified in role_arn.”

The Two-Step Credential Flow

Here’s the complete flow:

1. Lambda execution role provides credentials via environment variables

2. Bot sends: `aws eks list-clusters --profile oak`

3. MCP server extracts `--profile oak` from the command

4. Boto3 creates a session with `profile_name='oak'`

5. Boto3 reads `~/.aws/config` and finds `[profile oak]`

6. Sees `credential_source = Environment` and loads AWS_ACCESS_KEY_ID, AWS_SECRET_ACCESS_KEY, AWS_SESSION_TOKEN from environment

7. Uses those credentials to call `sts:AssumeRole` for the role_arn

8. Gets back temporary credentials for the assumed role

9. Executes `eks:ListClusters` with the assumed role credentials

The key insight here is that credential_source = Environment lets us chain roles: Lambda role → assumed role.
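If it helps to see that chain spelled out, here is a rough sketch of what the profile does for you, written by hand. boto3 handles all of this internally when it reads the profile, so you would not normally write it; `base_credentials` and `assumed_role_credentials` are hypothetical helper names, and the STS client is passed in so the logic can be exercised without real AWS access.

```python
import os


def base_credentials(environ=os.environ):
    """Collect the Lambda execution role credentials from the environment."""
    return {
        "aws_access_key_id": environ["AWS_ACCESS_KEY_ID"],
        "aws_secret_access_key": environ["AWS_SECRET_ACCESS_KEY"],
        "aws_session_token": environ["AWS_SESSION_TOKEN"],
    }


def assumed_role_credentials(sts_client, role_arn, session_name="MyBot"):
    """Call sts:AssumeRole and return kwargs that boto3.Session accepts."""
    response = sts_client.assume_role(RoleArn=role_arn, RoleSessionName=session_name)
    creds = response["Credentials"]
    return {
        "aws_access_key_id": creds["AccessKeyId"],
        "aws_secret_access_key": creds["SecretAccessKey"],
        "aws_session_token": creds["SessionToken"],
    }


# Usage inside Lambda (needs boto3 and real credentials, so shown as comments):
#   import boto3
#   sts = boto3.client("sts", **base_credentials())
#   role = "arn:aws:iam::111111111111:role/read-only-role"
#   session = boto3.Session(**assumed_role_credentials(sts, role))
#   print(session.client("eks", region_name="us-east-1").list_clusters()["clusters"])
```

The profile-based approach gives the same result without any of this code living in the bot, which is the whole point of letting `--profile` do the work.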

What About source_profile?

You might think you could do this instead:

    [profile default]
    credential_source = Environment

    [profile oak]
    role_arn = arn:aws:iam::123456789:role/read-only-role
    source_profile = default

This doesn’t work. When a profile has `credential_source = Environment`, it can’t be used as a source_profile. The AWS SDK documentation mentions this, but it’s easy to miss.

I spent hours debugging this

Each profile needs its own `credential_source = Environment` setting directly. No chaining through a default profile.

Now that we understand how boto3 resolves profiles and credentials, let’s build the actual implementation.

Building the Solution

We need to configure everything so that when the bot says `aws eks list-clusters --profile oak`, it automatically assumes the correct role in the Oak account and returns results.

Here’s what we need to build:

1. An AWS config file with profiles for each account

2. A Dockerfile that pre-installs the MCP server and copies the config

3. A Python worker module that sets up the MCP client with correct environment variables

4. IAM permissions on both sides of the trust relationship

Let’s go through each piece.

The AWS Config File

Create a file called `aws_config` in your Lambda project:

    # AWS Config for Multi-Account Access

    [profile oak]
    role_arn = arn:aws:iam::111111111111:role/read-only-role
    role_session_name = MyBot
    region = us-east-1
    credential_source = Environment

    [profile birch]
    role_arn = arn:aws:iam::222222222222:role/read-only-role
    role_session_name = MyBot
    region = us-east-1
    credential_source = Environment

    [profile palm]
    role_arn = arn:aws:iam::333333333333:role/read-only-role
    role_session_name = MyBot
    region = us-east-1
    credential_source = Environment

Key points:

- Each profile is self-contained with `credential_source = Environment`

- No `[profile default]` needed

- `role_arn` points to the read-only role in each target account

- `role_session_name` can be anything - it’s useful for CloudTrail auditing

- Replace the account IDs with your actual account IDs
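Since a malformed profile tends to surface later as a vague credentials error, it can be worth linting the file before you deploy. The sketch below is one way to check the key points above with the standard library's `configparser`; `check_profiles` is a hypothetical helper, and the rules it enforces are just the ones from this post, not an official validation.

```python
import configparser

SAMPLE_CONFIG = """
# AWS Config for Multi-Account Access
[profile oak]
role_arn = arn:aws:iam::111111111111:role/read-only-role
role_session_name = MyBot
region = us-east-1
credential_source = Environment
"""


def check_profiles(config_text):
    """Return a list of human-readable problems found in an AWS config file."""
    parser = configparser.ConfigParser(interpolation=None)
    parser.read_string(config_text)
    problems = []
    for section in parser.sections():
        profile = parser[section]
        if not profile.get("role_arn"):
            problems.append(f"{section}: missing role_arn")
        if profile.get("credential_source") != "Environment":
            problems.append(f"{section}: credential_source is not Environment")
        if "source_profile" in profile:
            problems.append(f"{section}: uses source_profile")
    return problems


# An empty list means every profile is self-contained
print(check_profiles(SAMPLE_CONFIG))
```

Running this in CI against your `aws_config` file is cheap insurance compared to debugging the same mistake inside a deployed Lambda.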

The Dockerfile

We need to pre-install the AWS CLI MCP server and copy our config file:

    # Pre-install AWS CLI MCP server
    RUN mkdir -p /opt/aws-cli-mcp-server && \
        cd /opt/aws-cli-mcp-server && \
        uv venv && \
        uv pip install --python .venv/bin/python awslabs.aws-api-mcp-server && \
        chmod -R a+rX /opt/aws-cli-mcp-server

    # Copy AWS CLI config with pre-configured profiles
    COPY aws_config /opt/aws_config

Why `/opt` instead of `/tmp`? Lambda clears `/tmp` on every cold start, but I can read from `/opt`. The worker will copy it to `/tmp` at runtime since boto3 needs write access to the directory.

Also note: I tried copying the entire venv to `/tmp` and ran out of disk space. Lambda gives you 512MB in `/tmp` by default and the MCP server venv is huge. Running from `/opt` works fine.
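The runtime copy from `/opt` to `/tmp` is not shown in the excerpt further down, so here is a minimal sketch of what that step could look like. The function name `stage_aws_config` is hypothetical, and creating the working directory at the same time is my assumption (a subprocess can't start in a `cwd` that doesn't exist); the paths match the ones used elsewhere in this post.

```python
import os
import shutil


def stage_aws_config(
    src="/opt/aws_config",
    dest="/tmp/.aws/config",
    working_dir="/tmp/aws-mcp-working",
):
    """Copy the baked-in config to a writable path and return that path."""
    # /tmp starts empty on a cold start, so create the directories first
    os.makedirs(os.path.dirname(dest), exist_ok=True)
    os.makedirs(working_dir, exist_ok=True)
    shutil.copyfile(src, dest)
    return dest


# In the Lambda worker, before starting the MCP client:
#   config_path = stage_aws_config()
```

Because the copy is small and idempotent, calling it on every invocation is fine and saves you from tracking whether the current container is warm or cold.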

The Worker Module

Create `worker_mcp_aws_cli.py`:

    # Imports assumed; they are not shown in the original excerpt
    import os

    from mcp import StdioServerParameters, stdio_client
    from strands.tools.mcp import MCPClient


    def initial_aws_cli_mcp_client(aws_region):
        # Setup elided in the original excerpt (per the notes below, the config is
        # copied to /tmp here; opt_aws_cli_mcp_dir is assumed to be defined here too)
        ...
        env = {
            "HOME": "/tmp",
            "AWS_REGION": aws_region,
            "AWS_CONFIG_FILE": "/tmp/.aws/config",
            "AWS_API_MCP_WORKING_DIR": "/tmp/aws-mcp-working",
            "AWS_SDK_LOAD_CONFIG": "1",
            "PYTHONUNBUFFERED": "1",
            # Pass through Lambda execution role credentials
            "AWS_ACCESS_KEY_ID": os.environ.get("AWS_ACCESS_KEY_ID", ""),
            "AWS_SECRET_ACCESS_KEY": os.environ.get("AWS_SECRET_ACCESS_KEY", ""),
            "AWS_SESSION_TOKEN": os.environ.get("AWS_SESSION_TOKEN", ""),
        }
        return MCPClient(
            lambda: stdio_client(
                StdioServerParameters(
                    cwd="/tmp/aws-mcp-working",
                    command=f"{opt_aws_cli_mcp_dir}/.venv/bin/awslabs.aws-api-mcp-server",
                    env=env,
                )
            )
        )


    def build_aws_cli_mcp_client(aws_region="us-east-1"):
        aws_cli_mcp_client = initial_aws_cli_mcp_client(aws_region=aws_region)
        # Enter the context manually so the MCP session stays open for the agent
        aws_cli_client = aws_cli_mcp_client.__enter__()
        all_aws_cli_tools = aws_cli_client.list_tools_sync()
        print(f"AWS CLI MCP: Returning {len(all_aws_cli_tools)} tools")
        return aws_cli_mcp_client, all_aws_cli_tools

Critical parts:

1. Copy config to /tmp - boto3 needs the config file in a writable location

2. AWS_SDK_LOAD_CONFIG=1 and AWS_CONFIG_FILE - Together these make sure the subprocess loads the config file from /tmp. Without them, my profiles were not picked up

3. Pass through credentials - The subprocess doesn’t inherit environment automatically when you provide a custom env dict. You must explicitly pass AWS_ACCESS_KEY_ID, AWS_SECRET_ACCESS_KEY, and AWS_SESSION_TOKEN

This one got me for like 2 hours. Most Lambda code automatically picks up the IAM role assigned to the Lambda. Not this tool, BIG SIGH

I initially didn’t pass through the credentials and boto3 just silently failed with no helpful error messages.

Integrating with Strands

In your main agent setup (like `worker_agent.py`), integrate the MCP client:

    from worker_mcp_aws_cli import build_aws_cli_mcp_client

    # Build AWS CLI MCP client
    aws_cli_mcp_client, aws_cli_tools = build_aws_cli_mcp_client(aws_region="us-east-1")

    # Prefix tool names to avoid collisions
    aws_cli_tools = add_prefix_to_mcp_tools(aws_cli_tools, "aws")

    # Add to agent tools
    tools.extend(aws_cli_tools)

The tool prefixing gives you `aws_call_aws` and `aws_suggest_aws_commands` instead of just `call_aws`. This prevents collisions if you have multiple MCP servers.

IAM Permissions - Both Sides Must Agree

For cross-account role assumption to work, you need permissions configured on both sides.

So the IAM role you assign to the Lambda must permit assuming the target IAM role, and the target role’s trust policy must permit the Lambda role to assume it. If either side is wrong, the role assumption fails.
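To show what “both sides” means in practice, here is a sketch that builds the two policy documents as Python dicts and prints them as JSON. The function names are hypothetical, and `000000000000` is a placeholder for whichever account hosts your Lambda; the policy shapes themselves are the standard IAM ones for `sts:AssumeRole`.

```python
import json


def lambda_role_policy(target_role_arns):
    """Identity policy for the Lambda execution role: allows sts:AssumeRole."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Action": "sts:AssumeRole",
                "Resource": list(target_role_arns),
            }
        ],
    }


def target_role_trust_policy(lambda_role_arn):
    """Trust policy for the read-only role in each target account."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Principal": {"AWS": lambda_role_arn},
                "Action": "sts:AssumeRole",
            }
        ],
    }


# Placeholder ARNs; swap in your own account IDs and role names
LAMBDA_ROLE = "arn:aws:iam::000000000000:role/Ue1LiVeraResearchTestWorkerRole"
TARGETS = ["arn:aws:iam::111111111111:role/read-only-role"]

print(json.dumps(lambda_role_policy(TARGETS), indent=2))
print(json.dumps(target_role_trust_policy(LAMBDA_ROLE), indent=2))
```

The first document attaches to the Lambda execution role, the second goes on the read-only role in every target account. The target role also needs its own read permissions for whatever the bot will query.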

This is hard enough to debug in a regular application, but a HUGE pain when your AI eats errors and doesn’t print them

Testing It

At this point, you should be able to deploy your Lambda and test:

Bot receives: “List EKS clusters in the Oak account”

Bot constructs: `aws eks list-clusters --region us-east-1 --profile oak`

MCP server:

1. Parses `--profile oak`

2. Loads `/tmp/.aws/config`

3. Finds `[profile oak]` with `credential_source = Environment`

4. Gets Lambda credentials from environment variables

5. Calls `sts:AssumeRole` for `arn:aws:iam::111111111111:role/read-only-role`

6. Gets temporary credentials

7. Executes `eks:ListClusters` with assumed credentials

8. Returns results

Beautiful

Except when it doesn’t work. Let’s talk about debugging.

The Debugging Journey

When I first deployed this, nothing worked. The bot would try to list resources and just get generic “Unable to locate credentials” errors. Not helpful.

The problem with boto3 and the AWS SDK is that errors are often silent or misleading. A missing environment variable produces the same error as a malformed config file or insufficient IAM permissions.

Failure 1: boto3 Can’t Find the Config File

First test: bot tries `aws eks list-clusters --profile oak`.

Error: `Unable to locate credentials. You can configure credentials by running “aws configure”.`

This error is lying. The credentials exist (Lambda execution role). The real problem: boto3 isn’t reading the config file at all.

I added debug logging to print the contents of `/tmp/.aws/config` right after copying it. The file was there. The profiles were correct. boto3 just wasn’t looking at it.

The fix: `AWS_SDK_LOAD_CONFIG=1`

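In my testing, boto3 did not see the profiles in the config file until this variable was set in the subprocess environment. (The boto3 documentation says the config file is read by default and `AWS_SDK_LOAD_CONFIG` is documented for other AWS SDKs, so if you still hit this error, also confirm that `AWS_CONFIG_FILE` and `HOME` point where you expect.) Since our profiles live in the config file, boto3 had no idea they existed.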

After adding that one line to the env dict, profiles started working.

One down

Failure 2: Subprocess Can’t See Lambda Credentials

Second test after adding `AWS_SDK_LOAD_CONFIG=1`: same error.

Wait, what? I just fixed this.

Turns out when you pass a custom `env` dictionary to `StdioServerParameters`, Python doesn’t merge it with the parent process environment. It REPLACES the environment entirely.

The MCP server subprocess was starting with ONLY the variables I explicitly provided:

- HOME

- AWS_REGION

- AWS_CONFIG_FILE

- AWS_SDK_LOAD_CONFIG

- AWS_API_MCP_WORKING_DIR

Missing: AWS_ACCESS_KEY_ID, AWS_SECRET_ACCESS_KEY, AWS_SESSION_TOKEN

Lambda provides these credentials to the parent process, but the subprocess couldn’t see them. So when boto3 tried to honor `credential_source = Environment`, there were no credentials in the environment.

The fix: explicitly pass through the credentials

    env = {
        # ... other variables ...
        "AWS_ACCESS_KEY_ID": os.environ.get("AWS_ACCESS_KEY_ID", ""),
        "AWS_SECRET_ACCESS_KEY": os.environ.get("AWS_SECRET_ACCESS_KEY", ""),
        "AWS_SESSION_TOKEN": os.environ.get("AWS_SESSION_TOKEN", ""),
    }

This grabs the credentials from the parent Lambda environment and explicitly passes them to the MCP server subprocess.

After this change, role assumption started working.
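One variation worth considering: defaulting a missing credential to an empty string is exactly what lets this failure stay quiet. The sketch below builds the same env dict but raises as soon as a credential variable is absent. `build_mcp_env` is a hypothetical helper, not what the repo does, and the variable values are copied from the worker module above.

```python
import os

REQUIRED_CREDENTIAL_VARS = (
    "AWS_ACCESS_KEY_ID",
    "AWS_SECRET_ACCESS_KEY",
    "AWS_SESSION_TOKEN",
)


def build_mcp_env(aws_region, environ=None):
    """Build the subprocess env, failing loudly if Lambda credentials are missing."""
    environ = os.environ if environ is None else environ
    missing = [name for name in REQUIRED_CREDENTIAL_VARS if not environ.get(name)]
    if missing:
        raise RuntimeError(
            f"Missing Lambda credentials in environment: {', '.join(missing)}"
        )
    env = {
        "HOME": "/tmp",
        "AWS_REGION": aws_region,
        "AWS_CONFIG_FILE": "/tmp/.aws/config",
        "AWS_API_MCP_WORKING_DIR": "/tmp/aws-mcp-working",
        "AWS_SDK_LOAD_CONFIG": "1",
        "PYTHONUNBUFFERED": "1",
    }
    # Pass through the execution role credentials explicitly
    env.update({name: environ[name] for name in REQUIRED_CREDENTIAL_VARS})
    return env
```

With this in place, a missing variable shows up as a clear exception in the Lambda logs rather than as an “Unable to locate credentials” message several layers down.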

Two down

Debugging Tips

If you’re building something similar, these techniques helped me:

1. Add debug logging everywhere - Print environment variables (redact sensitive values), print file contents, print boto3 session configuration

2. Test incrementally - I tested after each change: config file copied? Environment variable set? Credentials passed through?

3. Read the source code - The AWS CLI MCP server is open source. When documentation was unclear, I read driver.py and parser.py directly

4. Use CloudTrail - Failed AssumeRole attempts show up in CloudTrail in the target account with detailed error messages

The key lesson: boto3 fails silently. You need to instrument your code with logging to understand what’s actually happening.
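For the “print environment variables (redact sensitive values)” tip, here is a small sketch of one way to do it. `redact_env` and the marker list are hypothetical; adjust the markers to whatever counts as sensitive in your environment.

```python
import os

SENSITIVE_MARKERS = ("SECRET", "TOKEN", "KEY", "PASSWORD")


def redact_env(environ=None):
    """Return a copy of the environment with sensitive values masked."""
    environ = os.environ if environ is None else environ
    redacted = {}
    for name, value in environ.items():
        if any(marker in name.upper() for marker in SENSITIVE_MARKERS):
            # Keep the length so an empty value is still visible as a problem
            redacted[name] = f"<redacted, {len(value)} chars>"
        else:
            redacted[name] = value
    return redacted


# Log only the variables relevant to credential resolution
for name, value in sorted(redact_env().items()):
    if name.startswith("AWS_") or name == "HOME":
        print(f"{name}={value}")
```

Keeping the value length in the output is deliberate: a credential that was passed through as an empty string shows up as `0 chars`, which is the exact situation from Failure 2.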

Summary

So that’s it. We now have a single AI agent running in Lambda that can query resources across any number of AWS accounts using profile-based role assumption.

The AWS CLI MCP server does support `--profile` flags, even though it’s not well documented. You just need to configure everything correctly for boto3 to work.

The full code is in my GitHub/KyMidd/SlackStrandsAgenticBot repo if you want to see the complete implementation.

Now my bot can answer “How many EKS clusters are in the Oak account?” and actually get the answer. Pretty cool.

Good luck out there.

kyler